The Back Office Tasks You Can't Automate Until You Can See Them

I ask restoration contractors to create mind maps when they tell me they want to automate their back office. It sounds simple. Map out how your company runs, show me the tasks, and we'll teach the AI what needs to happen. The owner knows the business better than anyone. They have the vision. They can draw the picture.

But here's what I've learned from watching this process unfold dozens of times.

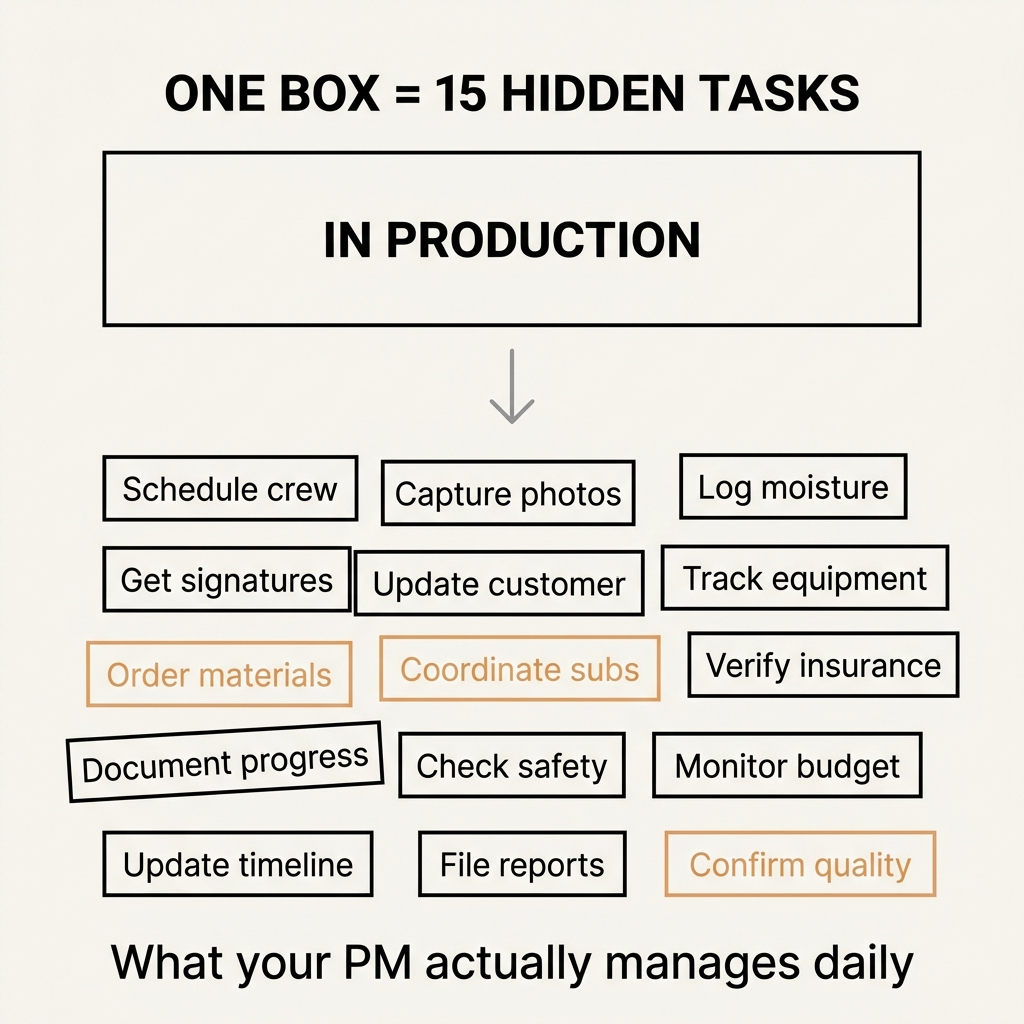

The mind map shows big picture thinking. An owner draws a box labeled "In Production" and considers that task documented. To them, it means the crew is working on the project. The map moves to the next box. Job complete. Invoice sent. Payment received. Clean, linear, manageable.

Then I sit with the project manager.

"In Production" means something entirely different to the person running the work. It means scheduling crew members to get on site. It means someone needs to capture documentation of everything happening that day. Another person logs moisture readings. Someone else confirms all documents are signed before work begins. The customer needs daily updates. The equipment needs tracking. The materials need ordering. The subcontractors need coordinating.

One box on the owner's mind map fragments into fifteen distinct tasks, each requiring a different person, different timing, different verification. None of it was written down anywhere. It existed in the project manager's head, in scattered spreadsheets, in memory, in improvisation.

The Task Inventory Problem Nobody Wants to Admit

Most restoration companies can't list what their back office actually does beyond category labels. Billing. Scheduling. Customer service. Documentation. The words sound like tasks, but they're really task categories containing dozens of micro-processes that nobody has mapped.

This isn't a documentation problem. It's a visibility problem.

Research shows that team members frequently spend time searching for the most up-to-date information on their tasks. When managers can't see which tasks are completed, delayed, or waiting for action, they lose control of the project entirely. The work happens. Money moves. Jobs get done. But the operational structure underneath remains invisible.

I've watched this create a specific kind of paralysis when contractors start thinking about AI adoption. They can't determine which tasks AI should handle because they've never documented which tasks exist. The conversation stalls before it begins.

The industry data supports what I'm seeing in the field. As of early 2024, only about 1.5% of construction firms were using AI. That number isn't low because the technology doesn't work. It's low because legacy systems with fragmented workflows and inconsistent data can't support AI integration. AI demands structured, interconnected environments. You can't automate chaos.

Where the Owner's Vision Meets Operational Reality

The gap between vision and execution shows up in every restoration company I work with. The owner sees the business in strategic terms. Growth. Market position. Revenue targets. Customer satisfaction. These are the boxes on the mind map.

The people doing the work see something completely different. They see the fifteen-step process hidden inside "billing" that involves matching invoices to job documentation, reconciling equipment costs, verifying labor hours, applying the correct markup, checking insurance requirements, formatting according to carrier specifications, and tracking payment status across multiple systems.

This isn't a failure of leadership. It's a natural consequence of how businesses grow. The owner builds the vision. The team builds the systems to execute that vision. Those systems accumulate over time, layer by layer, workaround by workaround, until the operational reality bears little resemblance to the strategic plan.

I think about this through the lens of what EOS calls the visionary and the integrator. The owner is the visionary. They should be thinking in big picture terms. My role, and the role of operational intelligence tools, is to act as the integrator. Take that big picture and break it into small, manageable chunks that different people can execute through specific tasks.

The problem surfaces when you try to integrate something that was never clearly defined in the first place.

What AI Actually Reveals About Your Operations

AI integration functions as a diagnostic tool before it becomes an automation tool. When you attempt to teach AI which tasks to handle, you're forced to document what those tasks actually are, how they connect to each other, what data they require, and what outcomes they produce.

This process exposes operational debt that human adaptability has been masking for years.

I see this pattern repeatedly. A contractor wants to automate invoice processing. We start mapping the workflow. Within thirty minutes, we've identified seven different people who touch an invoice at various stages, three different systems where invoice data lives, two separate approval processes that sometimes conflict, and four different document formats depending on the insurance carrier.

The humans have been navigating this complexity through institutional knowledge, personal relationships, and constant communication. They know who to ask when the system doesn't match the requirement. They remember which carrier needs which format. They've built workarounds for the gaps.

AI can't do any of that. AI needs clear rules, consistent data structures, and defined decision points. When you try to hand this messy process to AI, the mess becomes visible in a way it never was before.

Research on process visibility confirms this observation. Broken or ineffective processes drain significant revenue, yet the drain stays hidden because employees often compensate for a broken step with their own manual workarounds. What should be a fast task becomes meandering email chains, hand calculations, and wild-goose chases to track down information. The process appears to work because humans are compensating for the structural problems underneath.

The Repetition Versus Judgment Threshold

Once you've mapped the tasks, you face the next question. Which tasks should AI handle, and which need human judgment?

I'm starting to see a pattern emerge across the restoration companies I work with. The threshold isn't about task complexity. It's about task variability.

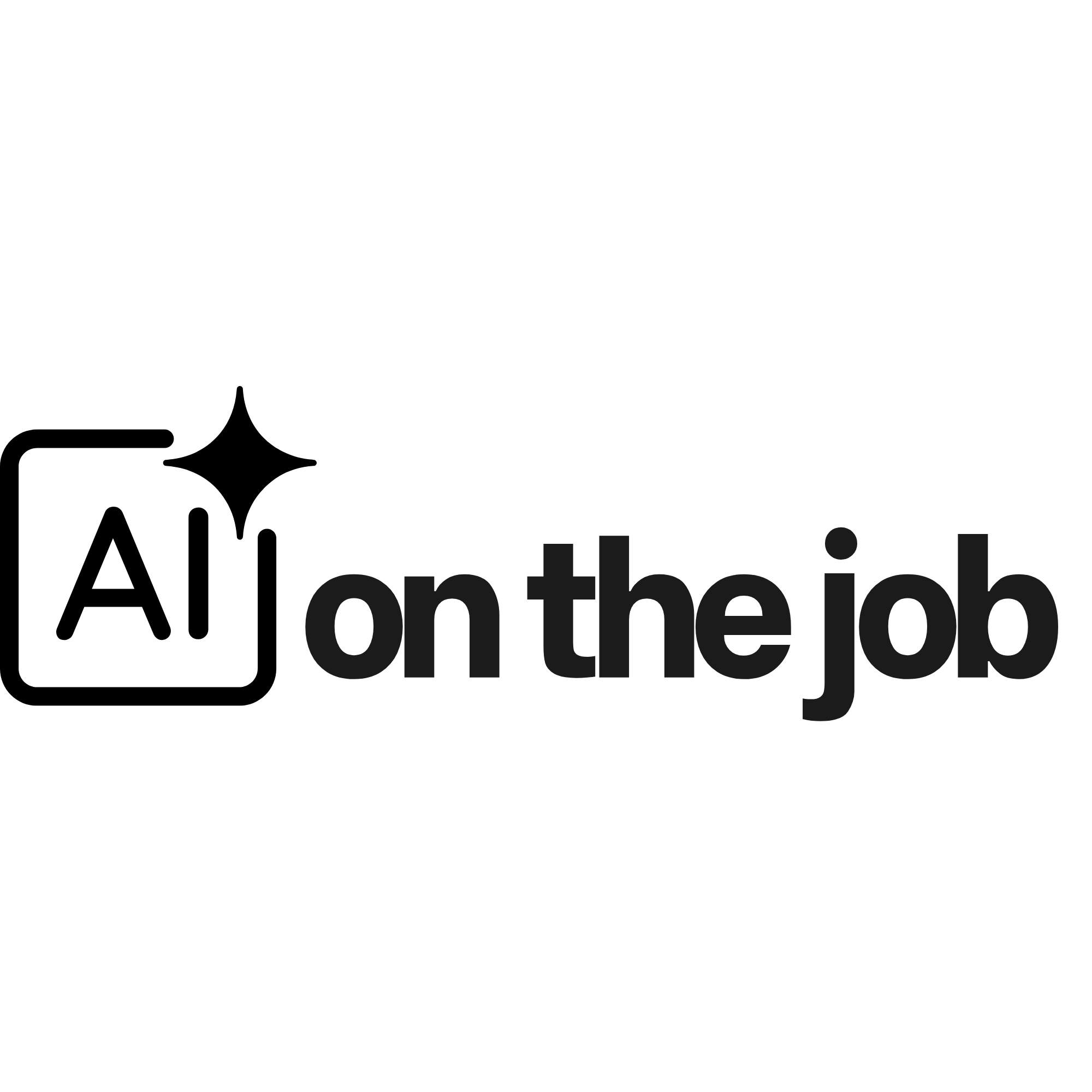

Invoice reconciliation is complex. It requires matching multiple data sources, applying different rules depending on the carrier, and catching discrepancies that could cost thousands of dollars. But it's also highly repetitive. The same logical steps apply to every invoice. The decision tree is complicated but consistent. This is where AI excels.

Customer dispute resolution is also complex, but in a different way. Each dispute involves unique circumstances, emotional dynamics, relationship history, and judgment calls about what the customer actually needs versus what they're asking for. The pattern changes every time. This is where humans remain essential.

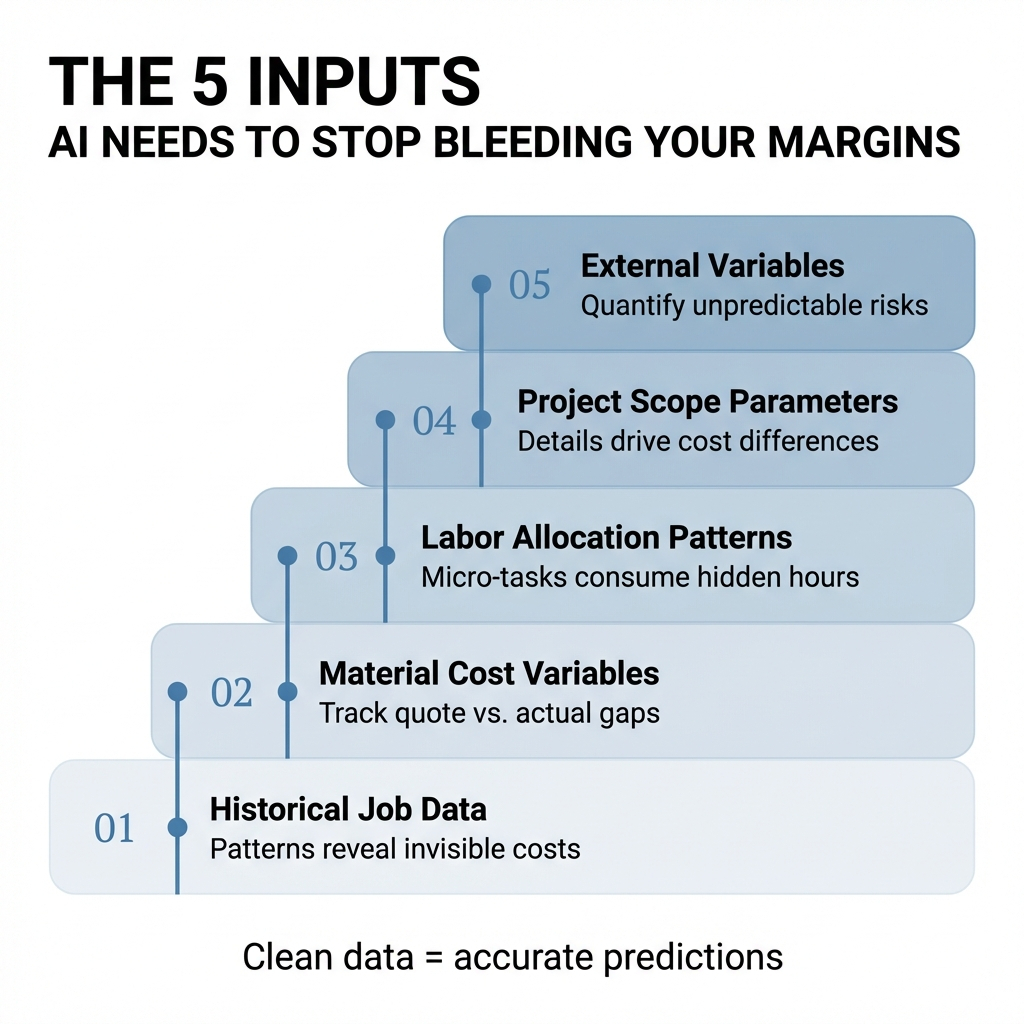

The research supports this division. Studies suggest that AI can automate up to 30% of construction tasks by handling repetitive, time-consuming work like data entry and reporting. This frees teams to focus on strategy, creativity, and delivery. The work that requires adapting to novel situations, reading between the lines, or making judgment calls in ambiguous circumstances stays human.

But here's what the research also reveals. When humans and AI work together on the same task, the results vary dramatically depending on the task type. In specialized decision-making tasks, human-AI combinations often perform worse than either humans or AI alone. But in content creation tasks, the combination produces significantly better results.

This suggests that the integration strategy matters as much as the technology itself. You can't just add AI to existing workflows and expect improvement. You need to redesign the workflow around the strengths of each.

What Actually Happens During the Transition

The 60-to-90-day period when AI starts handling tasks previously done by humans reveals things that nobody discusses in the sales pitch.

The technology works. The AI processes the invoices, generates the tasks, tracks the documentation. But the humans resist in ways that aren't about technology at all.

I've watched back office staff continue using their old spreadsheets alongside the new AI system because they don't trust the AI to catch the exceptions they've learned to watch for over years of experience. I've seen project managers manually verify every task the AI generates because they're not confident it understands the nuances of their specific operation.

This isn't stubbornness. It's rational caution from people who've been burned by technology promises before.

The resistance creates a specific kind of operational debt. You're running two systems in parallel. The old manual process and the new AI process. This doubles the work instead of reducing it. The efficiency gains you expected don't materialize because nobody is willing to let go of the safety net.

Research on AI collaboration shows another dynamic at play. When people transition from working with AI back to working independently, they experience increased feelings of control but also significant decreases in intrinsic motivation and increases in boredom. The AI makes the work easier in the moment, but it also makes the work feel less meaningful.

This suggests that the transition isn't just about training people to use new tools. It's about helping them find new sources of meaning in work that's been fundamentally restructured.

The Skill Shift Nobody Is Preparing For

Back office roles are evolving from task execution to AI supervision, but most small contractors haven't adjusted their hiring or training approaches to match this shift.

The person who used to spend their day entering data and reconciling invoices now needs to spend their day monitoring AI outputs, identifying patterns in the exceptions the AI flags, and making judgment calls about when the AI's recommendation should be overridden.

These are different skills. Data entry requires attention to detail and consistency. AI supervision requires pattern recognition, systems thinking, and the confidence to question a recommendation from a system that's usually right.

I'm seeing this play out in real time as restoration companies implement operational intelligence tools. The staff members who succeed in the new environment aren't necessarily the ones who were best at the old tasks. They're the ones who can shift from executing procedures to evaluating procedures.

The industry research suggests this shift will accelerate over the next five to ten years. Site managers will increasingly rely on AI-driven insights instead of manually reviewing logs at the end of each day. They will get real-time alerts for schedule delays or quality concerns. The role changes from information gathering to decision-making. AI becomes a co-pilot rather than a replacement.

But this transition requires preparation that most contractors aren't doing yet. You can't just hand someone a new tool and expect them to intuitively understand how to supervise an AI. The skills need to be taught, practiced, and reinforced over time.

The Measurement Gap That Prevents ROI Calculation

Contractors ask me how much money AI will save them. I can't answer that question without first knowing how much their current processes cost.

Most don't know.

They know their total labor costs. They know their revenue per job. But they can't tell me how much time their back office spends on invoice reconciliation versus customer communication versus documentation management. They've never timed these processes. They've never tracked the variation between simple jobs and complex jobs. They've never measured how long it takes to resolve an exception versus process a standard transaction.

Without baseline metrics, you can't calculate ROI. You can measure that AI processes invoices faster than humans, but you can't quantify the value of that speed if you don't know what the human speed was or what the human was doing with the time that's now been freed up.
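To make the baseline point concrete, here is a minimal sketch of the arithmetic in Python. The function names and every number in it (minutes per invoice, invoice volume, hourly rate) are hypothetical placeholders, not figures from any real company. The point is only that the savings number cannot be computed until the baseline minutes have actually been measured.

```python
def monthly_labor_cost(minutes_per_item, items_per_month, hourly_rate):
    """Labor cost of one back office process for one month."""
    return minutes_per_item * items_per_month * hourly_rate / 60


def monthly_savings(baseline_minutes, new_minutes, items_per_month, hourly_rate):
    """Difference between the measured baseline cost and the cost after automation."""
    before = monthly_labor_cost(baseline_minutes, items_per_month, hourly_rate)
    after = monthly_labor_cost(new_minutes, items_per_month, hourly_rate)
    return before - after


# Hypothetical inputs: 20 minutes per invoice by hand, 5 minutes of human
# review per invoice after automation, 200 invoices a month, $30 per hour.
savings = monthly_savings(
    baseline_minutes=20,
    new_minutes=5,
    items_per_month=200,
    hourly_rate=30,
)
```

If the 20-minute baseline is a guess rather than a measurement, the savings figure is a guess too. The calculation also says nothing about what the freed-up time is worth, which depends on what the person does with it.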

This creates a specific challenge for AI adoption. The technology delivers real value, but that value remains invisible in the same way the original tasks were invisible. You're trading one form of operational opacity for another.

The broader industry data reinforces why this matters. The average restoration company operates with 38% overhead and 3.8% net profit before taxes. More than one-third of companies break even or lose money, according to the RIA's 2024 Cost of Doing Business Report. Labor inefficiency and unbillable labor time cost restoration contractors hundreds of thousands of dollars annually in work that happens between jobs, during travel, or in administrative tasks that never get billed.

AI can recover some of that lost margin, but only if you can identify where the margin is leaking in the first place. The measurement problem precedes the automation problem.

The Incremental Path That Actually Works

I've learned that successful AI adoption in restoration back offices follows a specific sequence. You don't automate everything at once. You start with the tasks that have the cleanest data, the most consistent patterns, and the lowest risk if something goes wrong.

Task generation from status changes tends to work well as a starting point. When a job moves to "In Production," the system automatically creates the fifteen tasks the project manager knows need to happen. This doesn't require perfect data. It just requires a clear trigger and a defined task list. The AI isn't making decisions. It's executing a predetermined workflow.
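A rough sketch of what "a clear trigger and a defined task list" can look like in Python follows. The structure, the role names, and the function name are assumptions for illustration, and the task list shows only the eight tasks named earlier in this article, not a full fifteen. A real list would come from sitting with the project manager.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical templates: each job status maps to (task title, owner role).
TASK_TEMPLATES = {
    "In Production": [
        ("Schedule crew on site", "project_manager"),
        ("Capture daily site documentation", "technician"),
        ("Log moisture readings", "technician"),
        ("Confirm all documents are signed", "office_admin"),
        ("Send daily customer update", "office_admin"),
        ("Track equipment", "project_manager"),
        ("Order materials", "project_manager"),
        ("Coordinate subcontractors", "project_manager"),
    ],
}


@dataclass
class Task:
    job_id: str
    title: str
    owner_role: str
    created_on: date


def tasks_for_status_change(job_id, new_status, today):
    """Return the predefined tasks for a status; no decisions are made here."""
    templates = TASK_TEMPLATES.get(new_status, [])
    return [Task(job_id, title, role, today) for title, role in templates]


# Example: a hypothetical job moves to "In Production".
tasks = tasks_for_status_change("JOB-1001", "In Production", date(2024, 3, 4))
```

A status with no template simply produces no tasks, which makes it obvious which boxes on the mind map have not been broken down yet.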

Once that's stable, you move to documentation tracking. The AI monitors whether required documents have been captured, signed, and filed. It flags missing items before they become problems. This adds decision support without requiring the AI to make autonomous decisions.
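The documentation check can be sketched in the same spirit. This assumes each required document carries three yes/no flags (captured, signed, filed). The document names and data shape are hypothetical, and a real system would pull them from wherever job files actually live.

```python
# The three states the article names for a required document.
REQUIRED_STEPS = ("captured", "signed", "filed")


def flag_missing_items(required_docs, doc_status):
    """List every required document step that has not been completed.

    required_docs: names of documents the job needs (hypothetical names).
    doc_status: maps a document name to a dict of step -> True/False.
    """
    flags = []
    for doc in required_docs:
        status = doc_status.get(doc, {})  # a missing document fails every step
        for step in REQUIRED_STEPS:
            if not status.get(step, False):
                flags.append(f"{doc}: not {step}")
    return flags


# Example with hypothetical documents for one job.
required = ["work_authorization", "moisture_log"]
status = {
    "work_authorization": {"captured": True, "signed": True, "filed": False},
}
flags = flag_missing_items(required, status)
```

The function only reports gaps. Deciding what to do about an unsigned authorization stays with a person, which matches the decision-support role described above.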

The third layer involves pattern recognition across jobs. The AI starts identifying which types of jobs consistently run over budget, which customers generate the most change orders, which crew combinations produce the best efficiency. This is where the operational intelligence becomes genuinely valuable. You're not just automating existing tasks. You're discovering insights that weren't visible in the manual process.
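As a simplified illustration of this third layer, the sketch below computes how often each job type runs over budget. The field names (`job_type`, `budget`, `actual_cost`) and the sample records are invented for the example, and real pattern recognition would involve far more data and more careful statistics than a simple rate.

```python
from collections import defaultdict


def overrun_rate_by_job_type(jobs):
    """Share of jobs that exceeded budget, grouped by job type."""
    counts = defaultdict(lambda: [0, 0])  # job_type -> [over_budget, total]
    for job in jobs:
        entry = counts[job["job_type"]]
        entry[1] += 1
        if job["actual_cost"] > job["budget"]:
            entry[0] += 1
    return {job_type: over / total for job_type, (over, total) in counts.items()}


# Hypothetical completed jobs.
jobs = [
    {"job_type": "water", "budget": 10000, "actual_cost": 12500},
    {"job_type": "water", "budget": 8000, "actual_cost": 7600},
    {"job_type": "fire", "budget": 40000, "actual_cost": 39000},
]
rates = overrun_rate_by_job_type(jobs)
```

Even a calculation this simple depends on the earlier layers. If budgets and actual costs are not recorded consistently per job, there is nothing reliable to group.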

Each layer builds on the previous one. Each layer requires the data quality and process consistency established in the earlier stages. You can't skip steps without creating the kind of operational debt that undermines the entire implementation.

The pattern I'm seeing suggests that restoration companies need 90 to 120 days to stabilize each layer before moving to the next one. This isn't because the technology is slow. It's because the humans need time to build trust, adjust workflows, and develop the supervision skills required for the new environment.

What This Means for Your Back Office

The question isn't whether AI will transform restoration back offices. The technology already works. The question is whether you can create the operational visibility required for AI to function effectively in your specific environment.

This means starting with documentation before automation. Map the tasks that currently exist in institutional knowledge, scattered systems, and improvised workarounds. Break the big picture categories into specific, repeatable processes. Establish baseline metrics for time, cost, and quality.

Then you can begin the actual integration. Not all at once. Not with the expectation that technology alone solves the problem. But incrementally, with attention to how the humans adapt, where the resistance surfaces, and what new skills need development.

The restoration companies that succeed with AI aren't the ones with the most sophisticated technology. They're the ones who've done the operational archaeology required to make their back office visible in the first place.

What tasks are currently invisible in your back office that would need to surface before any meaningful automation could occur?